AI for Content Creation Workshop

@ CVPR 2022

19th June 2022

Room 208-210 + Zoom

Summary

The AI for Content Creation (AI4CC) workshop at CVPR 2022 brings together researchers in computer vision, machine learning, and AI. Content creation is required for simulation and training data generation, media like photography and videography, virtual reality and gaming, art and design, and documents and advertising (to name just a few application domains).

Recent progress in machine learning, deep learning, and AI techniques has allowed us to turn hours of manual, painstaking content creation work into minutes or seconds of automated or interactive work.

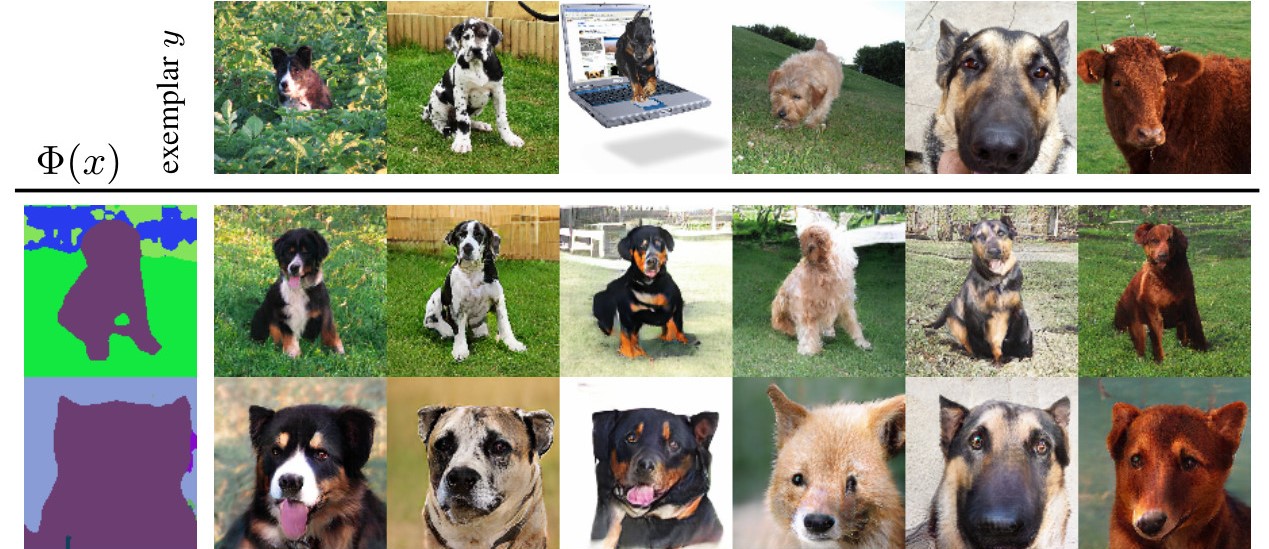

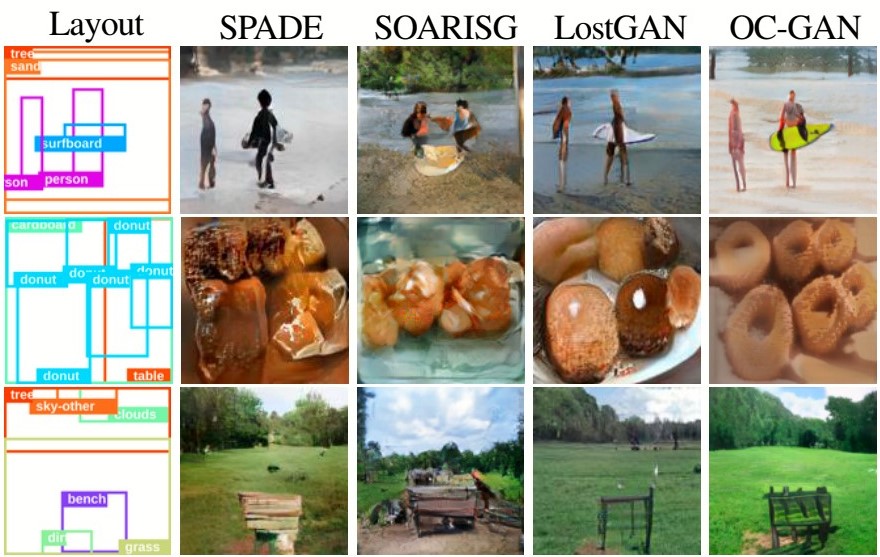

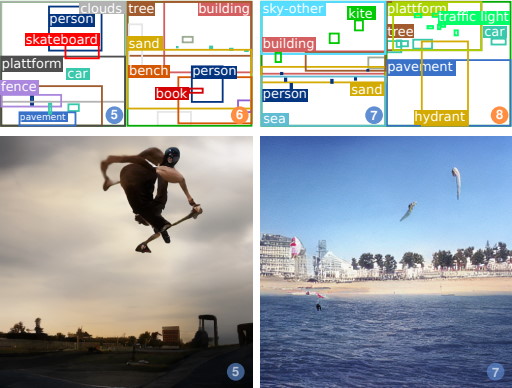

For instance, generative adversarial networks (GANs) can produce photorealistic images of 2D and 3D items such as humans, landscapes, interior scenes, virtual environments, or even industrial designs.

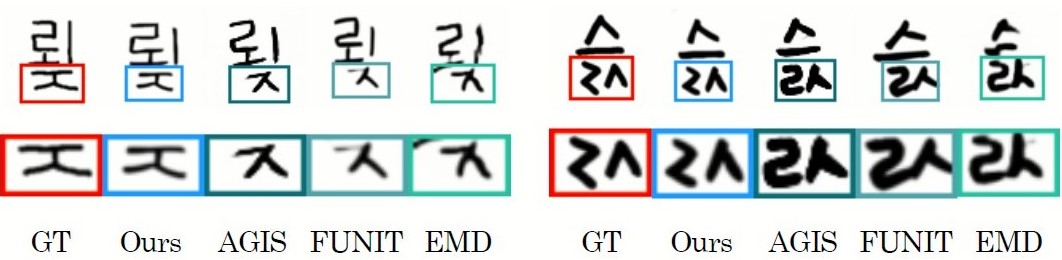

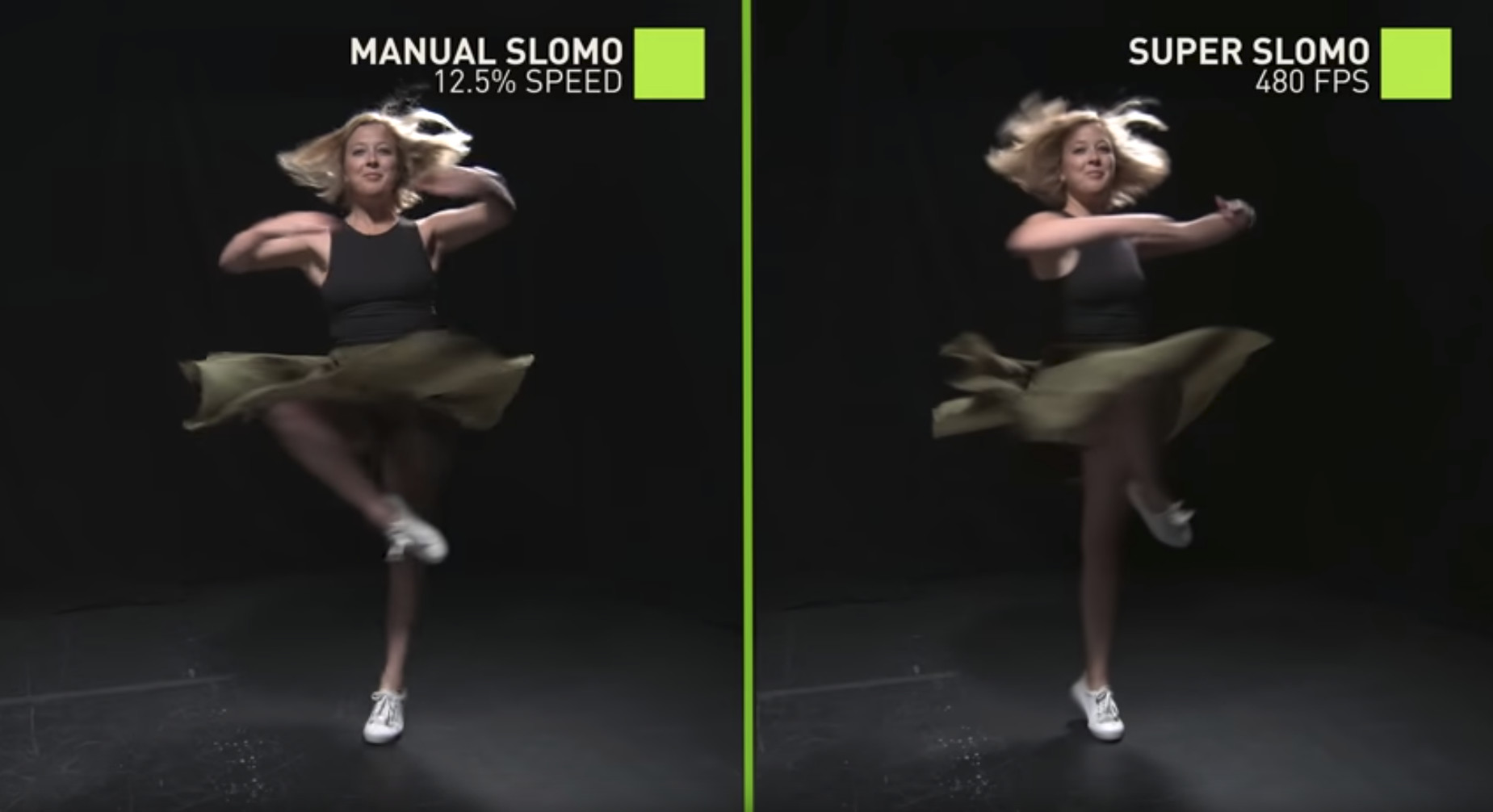

Neural networks can super-resolve videos and slow them down to super slow motion, interpolate between photos to synthesize intermediate novel views and even extrapolate beyond them, and transfer styles to convincingly render and reinterpret content.

In addition to creating awe-inspiring artistic images, these techniques offer unique opportunities for generating additional and more diverse training data.

Learned priors can also be combined with explicit appearance and geometric constraints, perceptual understanding, or even functional and semantic constraints of objects.

AI for content creation lies at the intersection of the graphics, computer vision, and design communities. However, researchers and professionals in these fields may not be aware of its full potential and inner workings. As such, the workshop consists of two parts: techniques for content creation and applications for content creation. The workshop has three goals:

- To cover introductory concepts to help interested researchers from other fields start in this exciting area.

- To present success stories to show how deep learning can be used for content creation.

- To discuss pain points that designers face using content creation tools.

More broadly, we hope that the workshop will serve as a forum to discuss the latest topics in content creation and the challenges that vision and learning researchers can help solve.

Welcome!

Deqing Sun (Google)

Huiwen Chang (Google)

Tali Dekel (Weizmann Institute)

Lu Jiang (Google)

Jing Liao (City University of Hong Kong)

Ming-Yu Liu (NVIDIA)

Cynthia Lu (Adobe)

Seungjun Nah (NVIDIA)

James Tompkin (Brown)

Ting-Chun Wang (NVIDIA)

Jun-Yan Zhu (Carnegie Mellon)

Awards

Best paper

Dongyeun Lee, Jae Young Lee, Doyeon Kim, Jaehyun Choi, Junmo Kim

Fix the Noise: Disentangling Source Feature for Transfer Learning of StyleGAN | Web+Code

Runner up

Yunseok Jang, Ruben Villegas, Jimei Yang, Duygu Ceylan, Xin Sun, Honglak Lee

RiCS: A 2D Self-Occlusion Map for Harmonizing Volumetric Objects

Best poster

Remote

Yohan Poirier-Ginter, Alexandre, Ryan Smith, Jean-François LaLonde

Overparameterization Improves StyleGAN Inversion | Webpage

Physical

Ajay Jain, Ben Mildenhall, Jonathan T. Barron, Pieter Abbeel, Ben Poole

Zero-Shot Text-Guided Object Generation with Dream Fields | Webpage | Poster

Also published at CVPR 2022